Context engineering is the difference between an AI agent that flails and one that ships. But what does “context” actually mean?

You probably don’t realize how much context you have. And you probably don’t realize what context your AI has. At Mechanical Orchard, we pay close attention to this so that our agents stay sharp — because overstuffed context windows degrade output quality, and irrelevant information becomes distracting noise.

This article walks through the Research, Plan, Implement workflow — one picture at a time — to show how context gets built up, compressed, and scoped down across each phase.

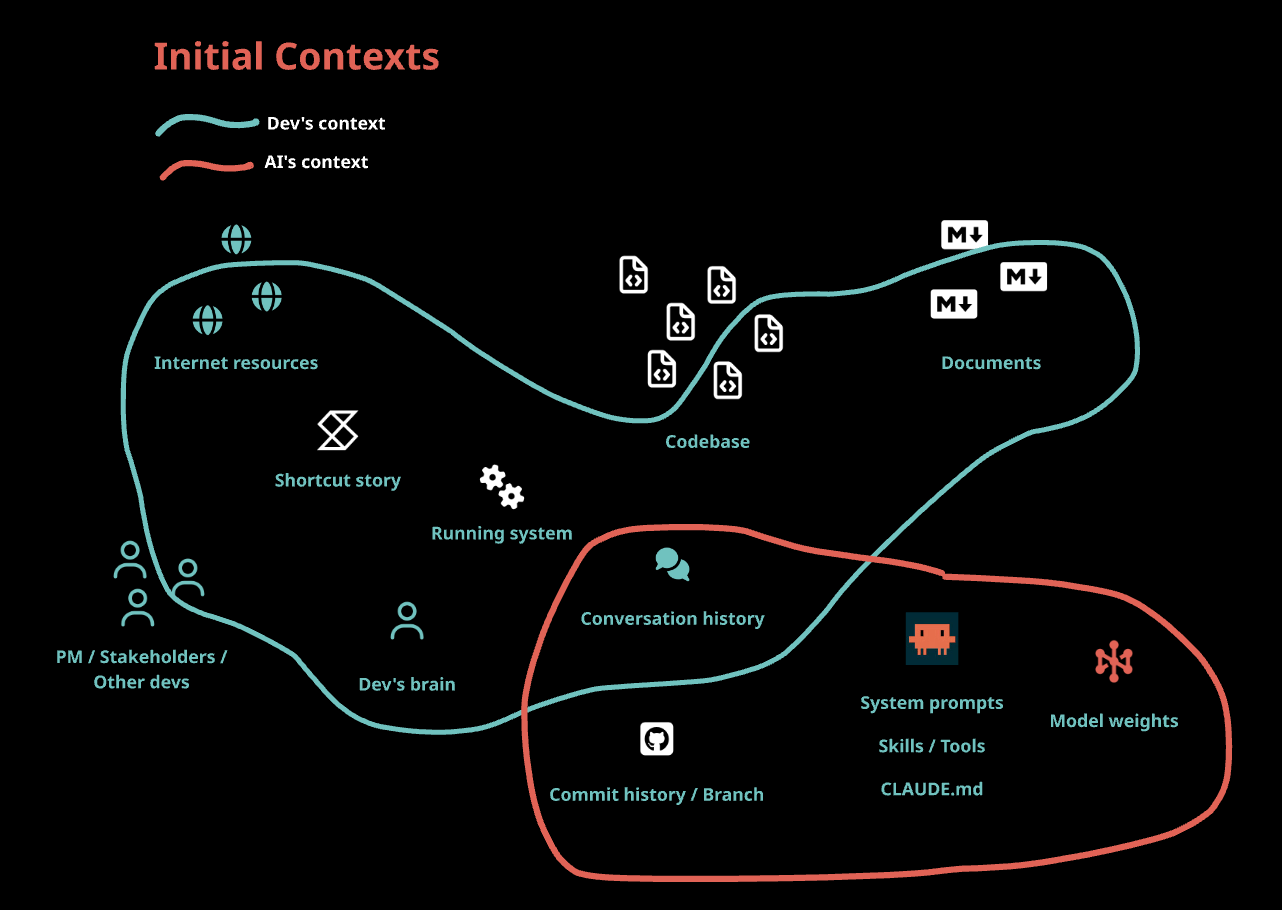

Before Starting Any Work

Here’s everything a dev brings to a task before any AI is involved, and here’s what the AI starts with. Notice the gap: only the conversation history is shared. The AI has no initial knowledge of the code, team conversations, backlog stories, or the running system.

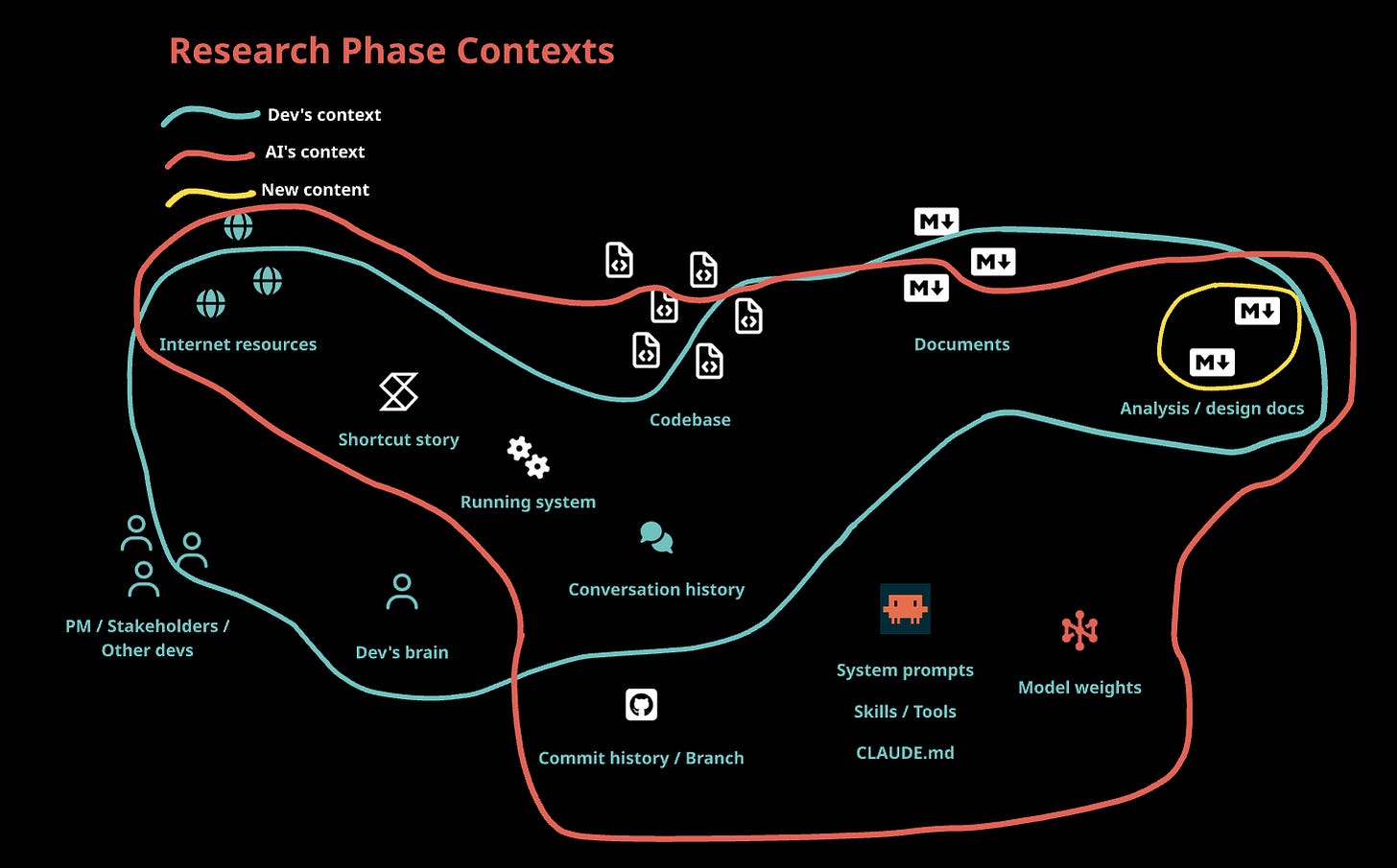

Research Phase

The AI explores the codebase, reads docs, searches the web — often with parallel sub-agents, each with their own scoped context, investigating different areas simultaneously. They can even inspect the running system and run our custom tooling for fully grounded information.

Their summaries get merged into shared artifacts: analysis docs, architecture notes, design specs, patterns found in existing code. Everything needed to break the work into plans without hauling the raw sources forward. The dev reviews these artifacts, not the exploration itself.

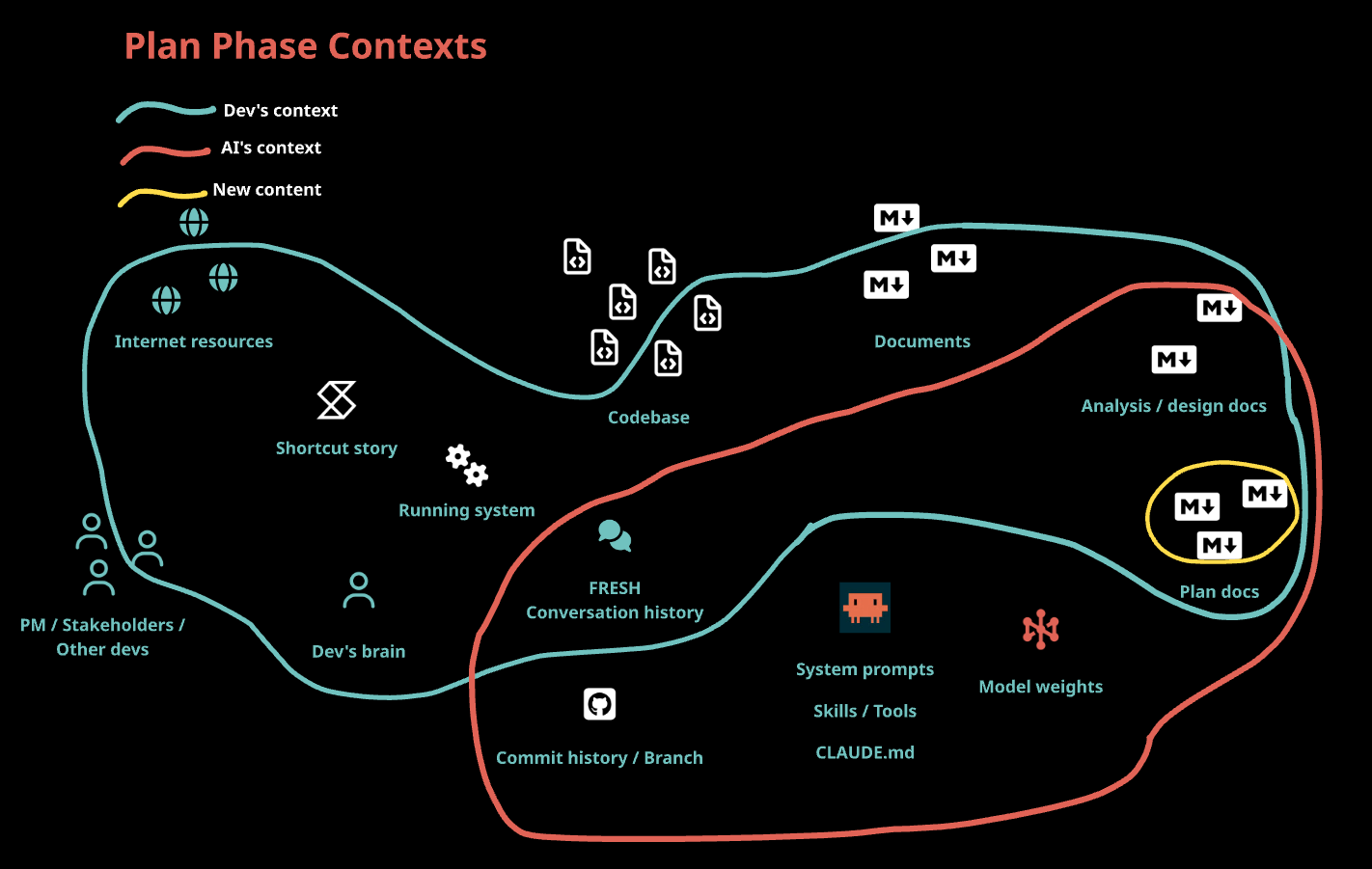
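The fan-out and merge described in the research phase can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not our actual tooling: `ResearchTask`, `run_subagent`, and `research` are hypothetical names, and `run_subagent` is a stand-in for a real model call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class ResearchTask:
    area: str                 # what this sub-agent investigates
    sources: tuple[str, ...]  # the only material placed in its context


def run_subagent(task: ResearchTask) -> str:
    """Hypothetical stand-in for a model call that sees only task.sources."""
    return f"{task.area}: reviewed {len(task.sources)} source(s)"


def research(tasks: list[ResearchTask]) -> str:
    """Run sub-agents in parallel and merge their summaries into one artifact."""
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the input tasks
        summaries = list(pool.map(run_subagent, tasks))
    lines = ["# Research notes"] + [f"- {s}" for s in summaries]
    return "\n".join(lines)


tasks = [
    ResearchTask("billing module", ("billing/invoice.py", "billing/README.md")),
    ResearchTask("auth flow", ("auth/session.py",)),
]
artifact = research(tasks)
print(artifact)
```

Only `artifact` moves forward to the next phase. The raw sources each sub-agent read stay behind in that sub-agent's own context.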

Plan Phase

The AI starts with a fresh context window: the research artifacts carry forward, not the conversation. This is compaction: distilling phases of work into structured documents so the AI gets signal, not noise. From here, the AI produces concrete plan docs with exact steps, exact files, and exact verification criteria, and calculates the dependencies between them.

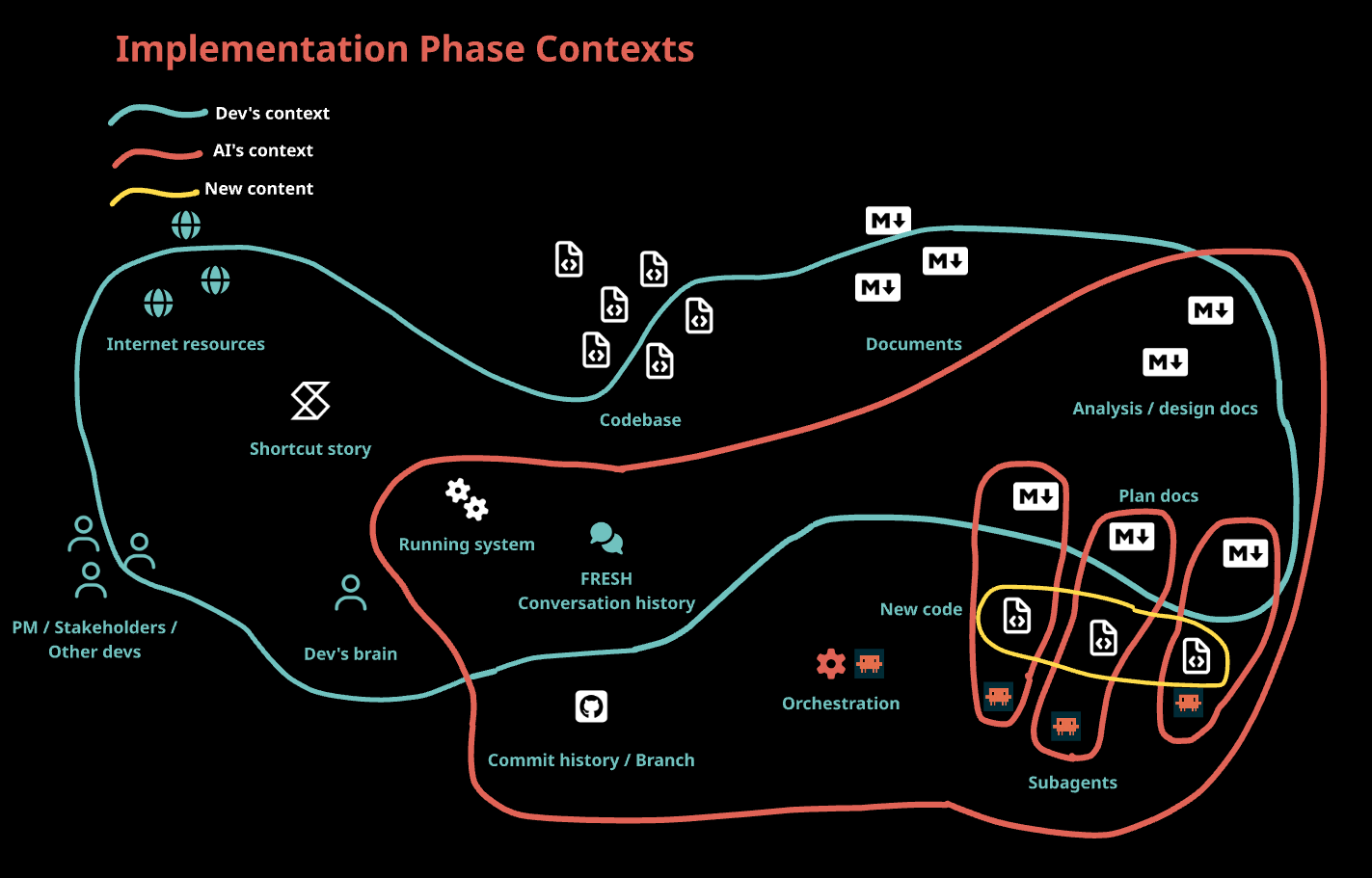
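One way to picture compaction and the resulting plan doc is the sketch below. The names `PhaseResult`, `compact`, and `PlanDoc`, and all of the field names, are assumptions made for illustration. The article does not prescribe a schema.

```python
from dataclasses import dataclass, field


@dataclass
class PhaseResult:
    transcript: list[str]      # every turn of the exploration, often very long
    artifacts: dict[str, str]  # distilled documents, keyed by title


def compact(result: PhaseResult) -> str:
    """Build the next phase's starting context from artifacts only."""
    # The transcript is deliberately not read here: it stays behind.
    return "\n\n".join(
        f"## {title}\n{body}" for title, body in result.artifacts.items()
    )


@dataclass
class PlanDoc:
    name: str
    steps: list[str]         # exact steps
    files: list[str]         # exact files
    verification: list[str]  # exact verification criteria
    depends_on: list[str] = field(default_factory=list)  # other plan names


research = PhaseResult(
    transcript=["read invoice.py", "grep for tax_rate", "compare three call sites"],
    artifacts={"Architecture notes": "Invoices are built in billing/invoice.py."},
)
planning_context = compact(research)

plan = PlanDoc(
    name="add-tax-field",
    steps=["Add tax_rate to Invoice", "Update the total calculation"],
    files=["billing/invoice.py"],
    verification=["Unit tests for Invoice.total pass"],
)
```

The planning context contains the architecture notes and none of the exploration steps, which is the point of starting fresh.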

Implement Phase

This is where you want the tightest scope, to minimize the chance of confusion and maximize the quality of output. Based on the dependency graph from the plan, the orchestrator spawns sub-agents with fresh contexts and just the plan doc for their unit of work: divide and conquer. If the scoping is right, the work will be right. Each agent has everything it needs to do what needs to be done and nothing more. The orchestrating agent makes sure everything was covered, performs validation against the running system, and keeps the dev in the loop.
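Dispatching work from a dependency graph can be sketched with Python's standard `graphlib` module. This is an illustrative sketch, not our orchestration system: `run_subagent` and `implement` are hypothetical names, and `run_subagent` stands in for starting a sub-agent whose fresh context holds only its plan doc.

```python
from graphlib import TopologicalSorter


def run_subagent(plan_name: str, plan_doc: str) -> str:
    """Hypothetical stand-in for a sub-agent that sees only its own plan doc."""
    return f"{plan_name}: done ({len(plan_doc.splitlines())} step(s))"


def implement(plans: dict[str, str], depends_on: dict[str, set[str]]) -> list[str]:
    """Run plans in dependency order, one wave of independent plans at a time."""
    sorter = TopologicalSorter(
        {name: depends_on.get(name, set()) for name in plans}
    )
    sorter.prepare()
    results = []
    while sorter.is_active():
        # Plans in one wave do not depend on each other, so a real
        # orchestrator could run them in parallel. Sorting only makes
        # the order deterministic for this example.
        wave = sorted(sorter.get_ready())
        for name in wave:
            results.append(run_subagent(name, plans[name]))
            sorter.done(name)
    # Coverage check: every plan produced exactly one result.
    assert len(results) == len(plans)
    return results


plans = {
    "schema": "Add tax_rate column\nWrite migration",
    "api": "Expose tax_rate in the invoice endpoint",
    "ui": "Show tax on the invoice page",
    "docs": "Document the tax_rate field",
}
depends_on = {"api": {"schema"}, "ui": {"api"}}
results = implement(plans, depends_on)
```

Here `schema` and `docs` have no dependencies and form the first wave, `api` waits for `schema`, and `ui` waits for `api`. Validation against the running system would follow as a separate step.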

Kicker

The industry is converging. As of early 2026, nearly every major framework and tool has landed on some version of this same phased workflow:

HumanLayer originated the Research-Plan-Implement pattern and Frequent Intentional Compaction

Superpowers has a similar workflow and document-generation strategy

Ralph loops rely on tightly scoped units of work from a planning phase

Claude Code recently started defaulting to “Clear context and implement” after Plan mode

The big players are following suit. Anthropic, OpenAI, Stripe, and many more use these patterns in their autonomous coding agent systems. At Mechanical Orchard, we have our own AI orchestration systems built on these principles.

Context engineering isn’t about writing better prompts. It’s about what the AI sees at each step — and just as importantly, what it doesn’t.